Real-time video analysis using Microsoft Cognitive Services, Azure Service Bus Queues and Azure Functions

Cloud-based video analysis is an emerging field that aims to automate video analysis in real time or near real time. The engine that drives such solutions is a set of cloud-based APIs offered by cloud providers such as AWS, Azure and Google Cloud. These APIs are built on computer vision, face recognition and object tracking. All of them are REST-based: they take a video frame or a set of frames and return a JSON document that summarizes the analysis result along with a confidence score. To achieve real-time or near-real-time analysis, an enterprise solution needs to address the following constraints:

- Split the streaming video input into smaller frame sets and process them in parallel, which keeps processing efficient.

- Use advanced heuristics and machine learning to minimize calls to the API: cloud cognitive-services APIs are priced per call, so inferring results locally with heuristics and machine learning reduces the overall cost.
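As a minimal sketch of the second point, the function below forwards a frame to the paid API only when it differs noticeably from the last frame that was sent. It uses simple frame differencing as a stand-in for the heuristics and machine learning the solution relies on, and it assumes frames are already decoded to grayscale. The threshold value, the flat-list frame representation and the function names are illustrative assumptions.

```python
from typing import List, Optional, Sequence

# Assumption: a grayscale frame is modelled as a flat sequence of 0-255 intensities.
Frame = Sequence[int]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel absolute difference between two equally sized frames."""
    if len(a) != len(b):
        raise ValueError("frames must be the same size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def frames_to_send(frames: List[Frame], threshold: float = 10.0) -> List[int]:
    """Return indices of frames that should be sent to the paid vision API.

    The first frame is always sent. Each later frame is compared with the most
    recently *sent* frame, so gradual changes still add up to a new call.
    """
    sent: List[int] = []
    last_sent: Optional[Frame] = None
    for i, frame in enumerate(frames):
        if last_sent is None or mean_abs_diff(frame, last_sent) > threshold:
            sent.append(i)
            last_sent = frame
    return sent


# Usage: a 16-pixel "camera" where half the scene changes at frame 3.
static = [0] * 16
moved = [0] * 8 + [200] * 8
frames = [static, static, static, moved, moved, static]
print(frames_to_send(frames))  # [0, 3, 5]
```

In this toy run, three of the six frames reach the API. Comparing against the last sent frame rather than the immediately preceding one keeps a slow, gradual change from slipping under the threshold indefinitely.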

Use case solved:

The solution we built ingests live video streams from a series of traffic cameras operating simultaneously and looks for vehicles that run red lights and vehicles that are pulled over onto curbs. When matching frames need to be displayed in the user interface, we also filter sensitive content out of them.
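Each API call returns a JSON summary with confidence scores. The sketch below assumes an object-detection response of the shape shown in the code comment (the field names `objects`, `object`, `confidence` and `rectangle` are assumptions to check against the API version in use) and applies a hypothetical red-light rule: while the signal is red, any vehicle whose top edge is beyond a configured stop line is flagged. The vehicle label set, the stop-line coordinate and the signal state are all assumed inputs, for example from per-camera configuration.

```python
import json
from dataclasses import dataclass
from typing import List

# Assumed (hypothetical) response shape, modelled on an object-detection result:
# {"objects": [{"object": "car", "confidence": 0.93,
#               "rectangle": {"x": 10, "y": 40, "w": 50, "h": 30}}]}

VEHICLE_LABELS = {"car", "truck", "bus", "motorcycle"}  # hypothetical label set


@dataclass
class Detection:
    label: str
    confidence: float
    x: int  # left edge in pixels
    y: int  # top edge in pixels (image y grows downward)
    w: int
    h: int


def parse_vehicles(payload: str, min_confidence: float = 0.6) -> List[Detection]:
    """Return vehicle detections whose confidence meets the threshold."""
    vehicles: List[Detection] = []
    for obj in json.loads(payload).get("objects", []):
        rect = obj["rectangle"]
        det = Detection(obj["object"], obj["confidence"],
                        rect["x"], rect["y"], rect["w"], rect["h"])
        if det.label in VEHICLE_LABELS and det.confidence >= min_confidence:
            vehicles.append(det)
    return vehicles


def red_light_violations(vehicles: List[Detection], stop_line_y: int,
                         light_is_red: bool) -> List[Detection]:
    """Hypothetical rule: with the light red, flag vehicles whose top edge is past
    the stop line. Assumes traffic moves up the frame, away from the camera."""
    if not light_is_red:
        return []
    return [v for v in vehicles if v.y < stop_line_y]


# Usage with a made-up payload.
payload = json.dumps({"objects": [
    {"object": "car", "confidence": 0.91, "rectangle": {"x": 100, "y": 150, "w": 80, "h": 60}},
    {"object": "car", "confidence": 0.88, "rectangle": {"x": 300, "y": 260, "w": 80, "h": 60}},
    {"object": "person", "confidence": 0.95, "rectangle": {"x": 500, "y": 120, "w": 30, "h": 70}},
    {"object": "truck", "confidence": 0.40, "rectangle": {"x": 20, "y": 90, "w": 120, "h": 90}},
]})
vehicles = parse_vehicles(payload)
flagged = red_light_violations(vehicles, stop_line_y=200, light_is_red=True)
print([(v.label, v.x) for v in flagged])  # [('car', 100)]
```

The person is dropped because it is not a vehicle and the truck because its confidence is below the threshold; of the two remaining cars, only the one above the stop line is flagged.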

Solution:

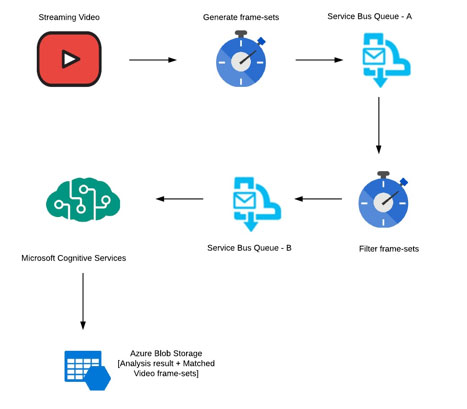

The streaming video is broken down into 10-second frame sets, which are queued in an Azure Service Bus queue. An Azure Function then checks the frames for the presence of objects using an open-source computer vision library; frames with no objects are not sent to Cognitive Services. We also apply other heuristics and CV analysis to decide up front whether a call to the Cognitive Services API is needed at all. Once a frame set is marked ready for Cognitive Services, it is sent to a second Service Bus queue. A second Azure Function calls Cognitive Services and gathers statistics for the frame set. Based on configuration, this function determines which frames match the criteria and forwards them to a third Service Bus queue. A third Azure Function processes these frame sets, blurs sensitive content and stores the results in Azure Blob Storage. The matched content can be viewed in a Node.js and Angular 2 web application running in Azure Container Service.
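The flow above can be sketched in a single process, with `queue.Queue` standing in for each Service Bus queue, plain callables standing in for the open-source CV check, the Cognitive Services call and the blur step, and a dict standing in for Blob Storage. All of these stand-ins, and the function names, are hypothetical; in the actual solution each stage is a separate Azure Function triggered by its own queue, which is what would let the stages scale independently.

```python
import queue
from typing import Callable, Dict, List, Tuple

# Hypothetical in-memory stand-ins: each queue.Queue plays a Service Bus queue,
# and a dict plays Azure Blob Storage. Frames are (timestamp_s, payload) pairs.
Frame = Tuple[float, str]
FrameSet = List[Frame]


def split_into_frame_sets(frames: List[Frame], window_s: float = 10.0) -> List[FrameSet]:
    """Group frames into consecutive windows of window_s seconds each."""
    windows: Dict[int, FrameSet] = {}
    for ts, payload in frames:
        windows.setdefault(int(ts // window_s), []).append((ts, payload))
    return [windows[k] for k in sorted(windows)]


def run_pipeline(frames: List[Frame],
                 has_objects: Callable[[str], bool],
                 is_match: Callable[[str], bool],
                 blur: Callable[[str], str],
                 window_s: float = 10.0) -> Tuple[Dict[str, str], int]:
    """Run the three stages in order; returns (blob_store, api_call_count)."""
    incoming, ready, matched = queue.Queue(), queue.Queue(), queue.Queue()
    blob_store: Dict[str, str] = {}
    api_calls = 0

    for frame_set in split_into_frame_sets(frames, window_s):
        incoming.put(frame_set)

    # Stage 1: cheap local CV check; frames without objects never reach the API.
    while not incoming.empty():
        kept = [f for f in incoming.get() if has_objects(f[1])]
        if kept:
            ready.put(kept)

    # Stage 2: one (stubbed) Cognitive Services call per frame; forward matches.
    while not ready.empty():
        hits = []
        for ts, payload in ready.get():
            api_calls += 1
            if is_match(payload):
                hits.append((ts, payload))
        if hits:
            matched.put(hits)

    # Stage 3: blur sensitive content, then persist under a per-frame key.
    while not matched.empty():
        for ts, payload in matched.get():
            blob_store[f"frame-{ts:.1f}"] = blur(payload)

    return blob_store, api_calls


# Usage with string payloads in place of real images.
frames = [(0.0, "empty"), (4.0, "car"), (9.5, "car red-light"),
          (12.0, "empty"), (15.0, "bus"), (21.0, "empty")]
store, calls = run_pipeline(
    frames,
    has_objects=lambda p: p != "empty",
    is_match=lambda p: "red-light" in p,
    blur=lambda p: p + " [blurred]",
)
print(store, calls)  # {'frame-9.5': 'car red-light [blurred]'} 3
```

Here six frames produce only three API calls: the frames tagged `empty` are dropped by the local check before any call is made, and only the red-light frame is blurred and stored.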

Design:

Results & Conclusion:

- Achieved real-time analysis with minimal API cost

- Scaled horizontally across multiple video streams

- Met multiple analysis objectives on the video streams

© 2023 CloudIQ Technologies. All rights reserved.